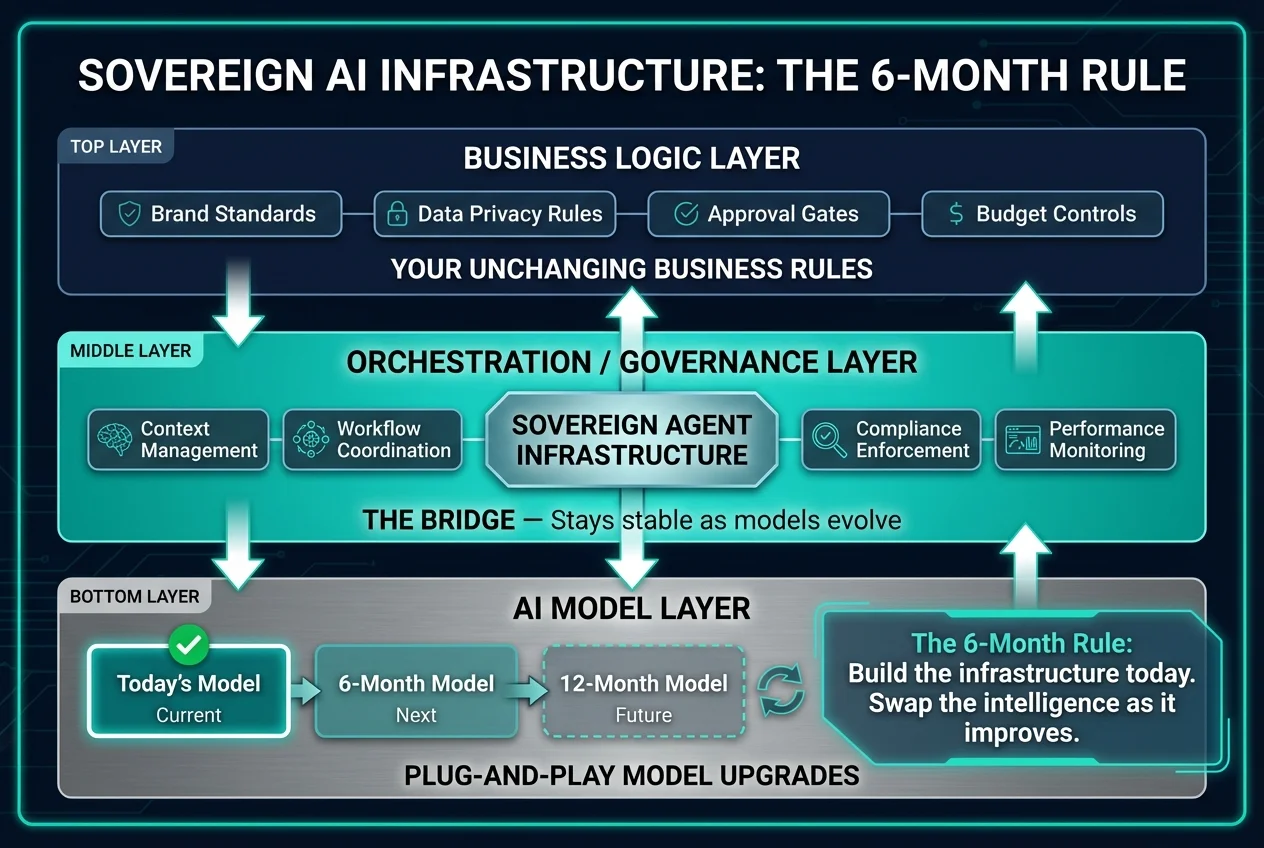

An effective AI strategy requires building infrastructure for the model capabilities of six months from now — not for the limitations of today. The pace of Large Language Model (LLM) innovation creates a common paralysis for operations leaders: build with today's imperfect tools, or wait for the next breakthrough? Insights from Anthropic's Claude Code development team reveal a counterintuitive answer — the 6-month rule — that reframes this decision entirely.

For mid-market and scaling companies, this approach changes the calculus of automation. It suggests that the biggest risk is not that AI is unready, but that organizations are optimizing their workflows for limitations that are about to vanish. Here is how to apply this forward-looking logic to your operational infrastructure.

The AI strategy 6-month rule: build for the future

The core philosophy driving leading AI development, including the creation of tools like Claude Code, is remarkably counterintuitive: do not build for the model of today. Instead, build for the model of six months from now.

When Boris Cherny of Anthropic's Claude Code team was developing early iterations of coding agents, the technology could not yet code effectively. The work was a grind of sleepless nights and prototypes that felt functionally useless. The strategy, however, was to identify the "frontier" (the specific tasks the model was currently bad at) and build the infrastructure to handle them, on the assumption that the model's intelligence would catch up.

For operations leaders, this is a strategic directive. If you are holding back on deploying agentic workflows because models currently struggle with specific nuances — perhaps complex reasoning over long contexts or maintaining state over weeks — you are falling behind. By the time you architect the perfect solution for today's constraints, the constraints will have shifted. Companies that embrace this mindset are already scaling revenue without proportional headcount growth by building the infrastructure first and letting model improvements amplify their returns.

Successful AI adoption requires decoupling your infrastructure from the model's current IQ. You must build the "body" of your operations (the integrations, the permissions, the governance) today, knowing that the "brain" (the model) will be swapped out for a smarter version shortly.
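
A minimal sketch of this separation, under stated assumptions: `Model`, `Workflow`, `StubModelV1`, and `StubModelV2` are hypothetical names invented for illustration, and the two stub classes stand in for real models rather than calling any vendor API. The point is only that the permissions and integrations survive a model swap untouched.

```python
from dataclasses import dataclass, replace
from typing import Callable, Dict, FrozenSet, Protocol


class Model(Protocol):
    """The "brain": anything that maps a request to an action name."""

    def choose_action(self, request: str) -> str: ...


@dataclass(frozen=True)
class Workflow:
    """The "body": permissions and integrations that outlive any one model."""

    model: Model
    allowed_actions: FrozenSet[str]
    integrations: Dict[str, Callable[[str], str]]

    def run(self, request: str) -> str:
        action = self.model.choose_action(request)
        # Governance check lives in the workflow, not in the model.
        if action not in self.allowed_actions:
            return f"blocked: {action}"
        return self.integrations[action](request)


class StubModelV1:
    """Hypothetical stand-in for today's model: only matches the word 'refund'."""

    def choose_action(self, request: str) -> str:
        return "issue_refund" if "refund" in request.lower() else "escalate"


class StubModelV2:
    """Hypothetical stand-in for a later model: also understands a paraphrase."""

    def choose_action(self, request: str) -> str:
        text = request.lower()
        if "refund" in text or "money back" in text:
            return "issue_refund"
        return "escalate"


workflow = Workflow(
    model=StubModelV1(),
    allowed_actions=frozenset({"issue_refund", "escalate"}),
    integrations={
        "issue_refund": lambda req: "refund queued",
        "escalate": lambda req: "sent to human",
    },
)

# Swap the brain; the body (permissions, integrations) is reused as-is.
upgraded = replace(workflow, model=StubModelV2())
```

In this sketch, `workflow.run("I want my money back")` escalates to a human, while the same call on `upgraded` queues the refund, with no change to the surrounding infrastructure.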

Legacy interfaces: why the terminal must die

A major friction point in current AI adoption is the persistence of legacy interfaces. Innovators express disbelief that terminals and command-line interfaces are still primary tools. These were meant to be a starting point in the history of computing, not its permanent endpoint.

This observation validates a massive shift occurring in business operations. For decades, "automation" meant forcing humans to learn the language of machines — learning SQL, navigating complex ERP dashboards, or understanding API calls. The future of operations is the inverse: machines learning the language of humans. This same paradigm shift is driving the move from traditional SaaS interfaces toward agentic workflows that understand intent rather than require menu navigation.

In a customer support automation or sales context, this means the end of rigid, menu-driven software. Ops leaders should stop buying tools that require their teams to act like computer engineers. Instead, the focus must shift to natural language interfaces where the outcome is requested, and an agent navigates the technical complexity.

The friction of the "terminal" (whether a literal command line or just a clunky, field-heavy CRM interface) is the bottleneck. The 6-month rule implies that although natural language agents may feel clunky today, they are the inevitable interface. Training your team on legacy, hard-coded software interfaces is an investment in a depreciating asset.

The operational risk of optimizing for today

There is a hidden danger in ignoring the 6-month trajectory: technical debt born from over-optimization. When companies build automation based strictly on what models can do right now, they often build elaborate scaffolding to prop up the model's weaknesses.

For example, if a model hallucinates easily, engineers might build complex, rigid validation chains. If a model has a short memory, they might chop data into tiny, fragmented pieces. These are workarounds for temporary problems.

When the next generation of models arrives six months later — with massive context windows and superior reasoning — those rigid workarounds become liabilities. They restrict the smarter model from doing its job. You are left with a legacy architecture built for a "dumber" AI.
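
One way to keep such a workaround from hardening into a liability is to derive it from a stated model limit rather than hard-coding it. The sketch below is illustrative only: `split_for_model` and `context_limit` are hypothetical names, and words are used as a rough stand-in for tokens.

```python
from typing import List


def split_for_model(words: List[str], context_limit: int) -> List[List[str]]:
    """Chunk input only as much as the current model's limit requires.

    `context_limit` is an assumed, caller-supplied number of words the model
    can read at once. Raising it is the only change needed on a model upgrade.
    """
    if context_limit <= 0:
        raise ValueError("context_limit must be positive")
    return [
        words[start:start + context_limit]
        for start in range(0, len(words), context_limit)
    ]


document = ["word"] * 10

# A small limit forces fragmentation into three pieces.
small_model_chunks = split_for_model(document, context_limit=4)

# A large limit passes the document through whole; the workaround disappears.
large_model_chunks = split_for_model(document, context_limit=100)
```

Because the limit is a parameter, a larger context window turns the chunking step into a pass-through instead of leaving fragmentation baked into the pipeline.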

To avoid this, operations strategy must focus on governance and outcome definition rather than micromanaging execution steps. Define what "good" looks like, establish the guardrails (data sovereignty, budget limits, approval gates), and leave the agent free to decide how the logic is carried out. This governance-first mindset is why AI governance has become a CEO-level responsibility: the stakes of getting it wrong compound with every model upgrade. If you keep your operational workflow adaptable, your systems become more efficient as the underlying model improves, without requiring a rebuild.
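
The three guardrails named above can be expressed as a small policy check that sits outside the agent. This is a sketch under assumptions, not a reference implementation: `Guardrails`, `ProposedAction`, `review`, and all field names and thresholds are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class Guardrails:
    allowed_regions: FrozenSet[str]  # data sovereignty: where data may be processed
    budget_limit: float              # hard cap on total spend
    approval_threshold: float        # single actions above this need human sign-off


@dataclass(frozen=True)
class ProposedAction:
    description: str
    cost: float
    data_region: str


def review(action: ProposedAction, rails: Guardrails, spent_so_far: float) -> str:
    """Judge what the agent proposes, without dictating how it got there."""
    if action.data_region not in rails.allowed_regions:
        return "deny: data region not allowed"
    if spent_so_far + action.cost > rails.budget_limit:
        return "deny: budget exceeded"
    if action.cost > rails.approval_threshold:
        return "needs_approval"
    return "allow"


rails = Guardrails(
    allowed_regions=frozenset({"eu"}),
    budget_limit=1000.0,
    approval_threshold=100.0,
)

small = review(ProposedAction("renew license", 50.0, "eu"), rails, spent_so_far=0.0)
large = review(ProposedAction("bulk purchase", 500.0, "eu"), rails, spent_so_far=0.0)
```

Here `small` is allowed outright and `large` is routed to an approval gate. The check constrains outcomes only, so a smarter model can change its execution steps without any change to the policy.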